This article explores how Node.js web applications can be scaled across multiple CPU cores and even machines using Docker.

Introduction

Deploying and scaling a Node.js web app (like a Next.js app) is easier than ever thanks to the cloud and serverless!

But what if you still want to be in charge of your own server architecture? Or maybe you just want to pay for your server resources and not your traffic or execution time?

Deploying your web app on a VPS (Virtual Private Server) is the perfect option in that case!

The problem with Node.js and single-threading

Node.js is single-threaded by nature: your JavaScript code runs on a single thread, so a single Node.js process can usually only utilize one CPU core and achieves “concurrency” by switching between tasks on that thread (the so-called “event loop”).

But what if your server has more than one CPU core? How can you leverage those to be able to handle more incoming traffic?

Utilizing multiple CPU cores with Node.js

Despite its single-threaded nature, Node.js still allows you to utilize multiple CPU cores.

Node.js ships with a built-in cluster module (“cluster mode”) to achieve some level of “multi-threading”. However, because each Node.js process is still single-threaded, all cluster mode does is fork multiple processes of your app, each with its own runtime and event loop, which share the same server port.

There are also very popular third-party tools like the process manager “pm2” that implement this concept. pm2 also has built-in load balancing, so it’s definitely worth checking out!
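As a minimal sketch, assuming pm2 is installed and your app's entry point is a hypothetical `server.js`, a pm2 process file (pm2 accepts JSON, YAML, or JavaScript config files) that starts one process per CPU core in cluster mode could look like this:

```yaml
# process.yml (hypothetical file name), started with: pm2 start process.yml
apps:
  - name: your-web-app     # hypothetical app name
    script: ./server.js    # hypothetical entry point of your Node.js app
    instances: max         # one process per available CPU core
    exec_mode: cluster     # uses Node.js cluster mode, so all processes share one port
```

After starting it, `pm2 list` shows one entry per running instance.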

Multiple Node.js instances with Docker Compose

If you’re already using Docker and Docker Compose in your setup, a great alternative is to skip cluster mode or pm2 altogether.

You can just use Docker Compose to create multiple replicas of your Node.js app (a Next.js app in my case).

The docker-compose.yml

Docker Compose makes it super easy to create multiple containers running the same service (“replicas”). Each replica is a separate Node.js process, so the operating system can schedule them on different CPU cores, without the need for any tools or additional code in your app.

It just requires a small edit to the docker-compose.yml:

```yaml
version: '3.7'

services:
  your-web-app:
    image: registry/.../your-website-image:latest
    # ...
    deploy:
      replicas: 3
    # ...
```

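Simply add the deploy key to the service you want to replicate and specify the number of replicas you want (just like in the example above). Note that the current Docker Compose CLI (docker compose, v2) applies deploy.replicas directly, whereas the legacy standalone docker-compose (v1) ignored the deploy key unless it was started with the --compatibility flag.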
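A slightly fuller sketch of the same service shows one detail that matters once there are several replicas: they should not publish a fixed host port. This assumes the app listens on port 3000 (the default of `next start`); the image name is kept exactly as in the example.

```yaml
services:
  your-web-app:
    image: registry/.../your-website-image:latest
    # No fixed host port mapping such as "3000:3000" here: three containers
    # cannot all bind the same host port. The reverse proxy reaches the
    # replicas over the internal Docker network instead.
    expose:
      - "3000"   # assumption: the app listens on port 3000
    deploy:
      replicas: 3
```

With the Compose v2 CLI, `docker compose up -d` starts all three replicas and `docker compose ps` lists them. You can also override the count at startup, e.g. `docker compose up -d --scale your-web-app=5`.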

Load Balancing

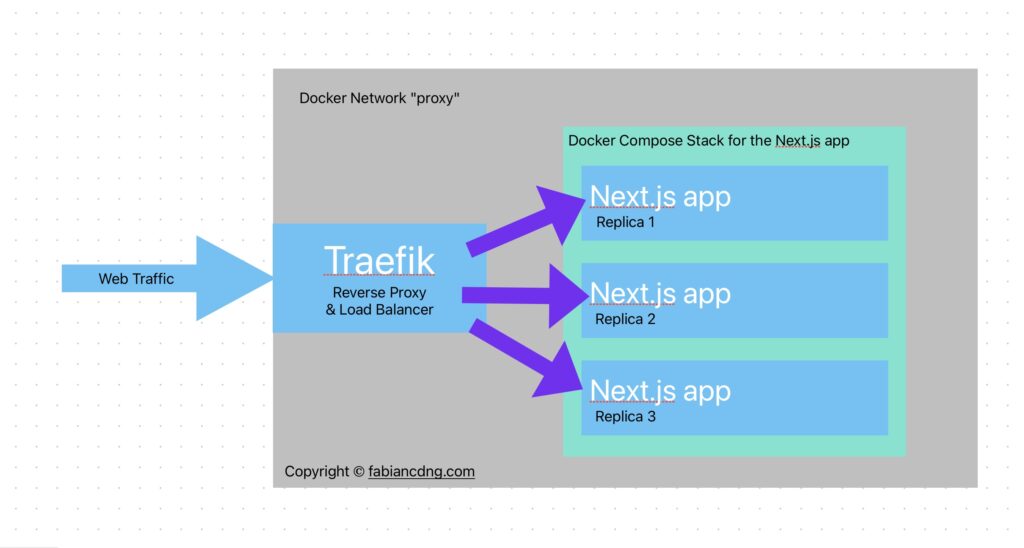

The last thing you have to figure out now is load balancing. After starting your Docker Compose stack, there are three containers running the same application, so your reverse proxy or load balancer needs to distribute incoming requests evenly across the replicas.

For an example setup, see the real-world example in the next section.

A real-world example: This website

Let’s take a look at my website fabiancdng.com as a real-world example:

The hosting requirements

My website is a Next.js application that uses Server-Side Rendering (SSR) on some pages. Therefore, it needs the Node.js runtime and can’t just be deployed as static assets.

I deployed the site in a Docker container (with Docker Compose) on a cheap VPS with 6 virtual CPU cores.

I configured Docker Compose to run three replicas of the Next.js app (each in its own container).

For routing and distributing incoming traffic evenly across the replicas, I need a reverse proxy that also acts as a load balancer.

The reverse proxy and load balancer

I use Traefik as a reverse proxy that takes all the incoming traffic to http(s)://*.fabiancdng.com/* and routes it to the corresponding Docker container within the internal Docker network.

NGINX is another popular solution for this purpose, and both NGINX and Traefik support load balancing.

In my case, Traefik load-balances between replicas of the same service out of the box, so no additional configuration was needed.
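The exact Traefik configuration for this site isn't shown here, so the following is only a minimal sketch, assuming Traefik v3 with its Docker provider, a hypothetical domain `example.com`, and an app listening on port 3000. Because all three replicas carry the same labels, Traefik registers them as servers of one service and spreads requests across them (round-robin by default):

```yaml
services:
  traefik:
    image: traefik:v3.0   # assumption: Traefik v3; these labels look the same in v2
    command:
      - --providers.docker=true
      - --providers.docker.exposedbydefault=false   # only route containers that opt in
      - --entrypoints.web.address=:80
    ports:
      - "80:80"
    volumes:
      # Traefik watches the Docker API to discover containers, including every replica
      - /var/run/docker.sock:/var/run/docker.sock:ro

  your-web-app:
    image: registry/.../your-website-image:latest
    deploy:
      replicas: 3
    labels:
      - "traefik.enable=true"
      - "traefik.http.routers.your-web-app.rule=Host(`example.com`)"   # hypothetical domain
      - "traefik.http.routers.your-web-app.entrypoints=web"
      - "traefik.http.services.your-web-app.loadbalancer.server.port=3000"   # assumption: app port
```

Only Traefik publishes a host port here; the replicas stay on the internal network, which is what lets Compose run several of them side by side.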

Once you’ve got your load balancing between the replicas in place, your traffic will be distributed among CPU cores, making your service able to handle many more incoming requests. 🥳 🎉

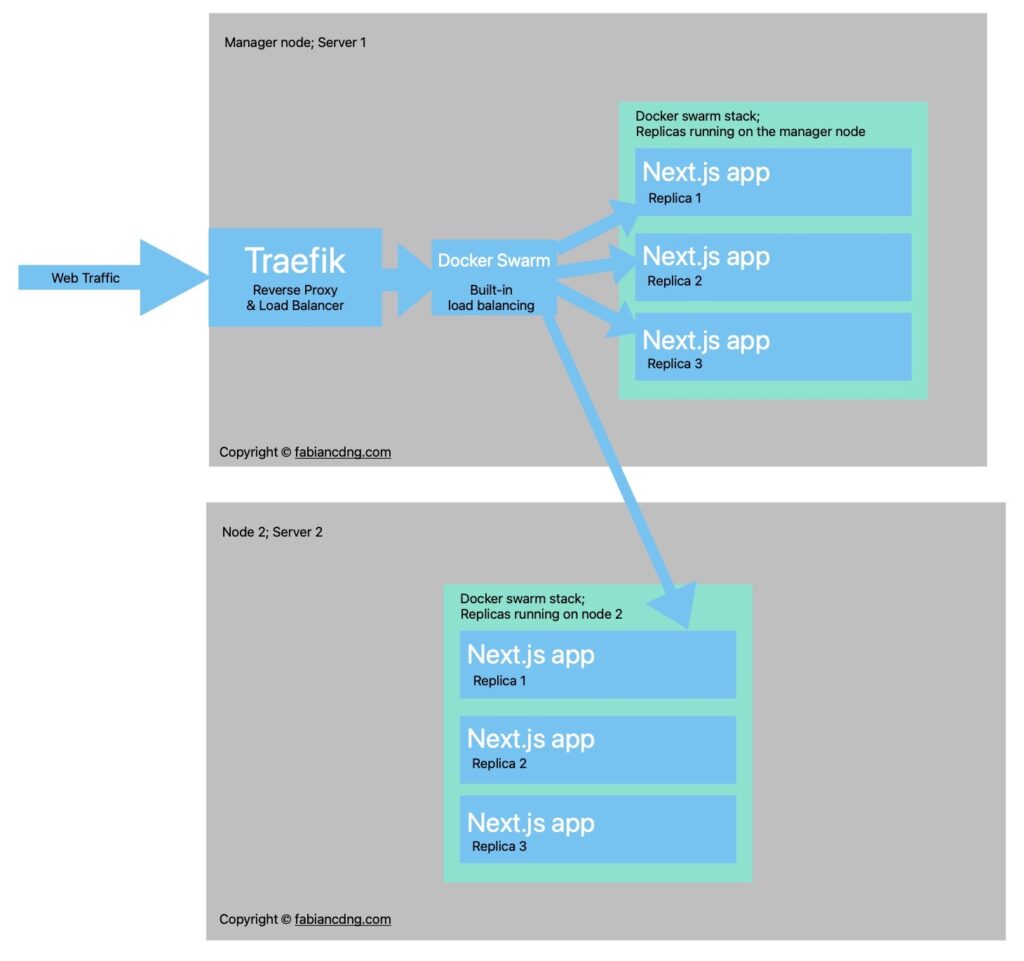

A look ahead: Scaling horizontally across machines

If you run this configuration with a container orchestration tool like Kubernetes or Docker Swarm, you can even scale your app horizontally across machines this way: instead of distributing the load only across replicas on one server, you can have a ton of replicas running on a ton of different servers.

Docker Swarm has built-in load balancing across nodes (its “routing mesh”), so if you plan on doing this, it might be worth checking out.
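For illustration, here is a minimal sketch of a Swarm stack file, assuming a hypothetical stack name `mystack`, an app listening on port 3000, and an image every node can pull from your registry. Unlike plain Compose, publishing a port with several replicas works here, because Swarm routes it through its ingress routing mesh:

```yaml
# stack.yml (hypothetical file name). Deploy it from a manager node with:
#   docker swarm init                          (once, to create the swarm)
#   docker stack deploy -c stack.yml mystack   (mystack is a hypothetical stack name)
version: "3.8"

services:
  your-web-app:
    image: registry/.../your-website-image:latest   # must be pullable by every node
    ports:
      # Published through Swarm's ingress routing mesh: a request to port 80 on
      # any node is forwarded to one of the replicas, on whichever node it runs.
      - "80:3000"   # assumption: the app listens on port 3000
    deploy:
      replicas: 6   # hypothetical count; Swarm spreads the tasks across the nodes
```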

However, if your application has reached an amount of traffic that is worth distributing across multiple machines, you might just consider moving to the cloud and a managed infrastructure.

Conclusion

Whether this is a good alternative to just moving to a managed service like Vercel or AWS Amplify (when deploying a Next.js app, for instance) or a managed container orchestration service is hard to tell…

Even though those services can get quite expensive for high-traffic sites, they often offer generous free tiers and pay-as-you-go models. Also, they provide high availability all around the globe thanks to edge routing and CDNs.

However, you can scale a loooong way with just a cheap VPS and you can protect yourself from unexpected costs for traffic or execution time. Also, you can deploy many different services on a single machine (maybe you would like a database or a free, open-source analytics tool as well?) and you can learn a lot about server administration, Docker and the complexity behind web applications and their architecture.

My recommendation: If you plan on building a full-stack side project or small SaaS and need to deploy a front end, back end, database, caching layer, etc., use a VPS, provided you’re okay with the additional configuration effort.

If you need to scale and you’re just getting started with networking and backend engineering… Don’t even bother with tools like Kubernetes. The growing complexity and maintenance effort to just keep your service running is likely not worth your time. Focus on building the app rather than the architecture around it and just use a cloud service.

Cheers.